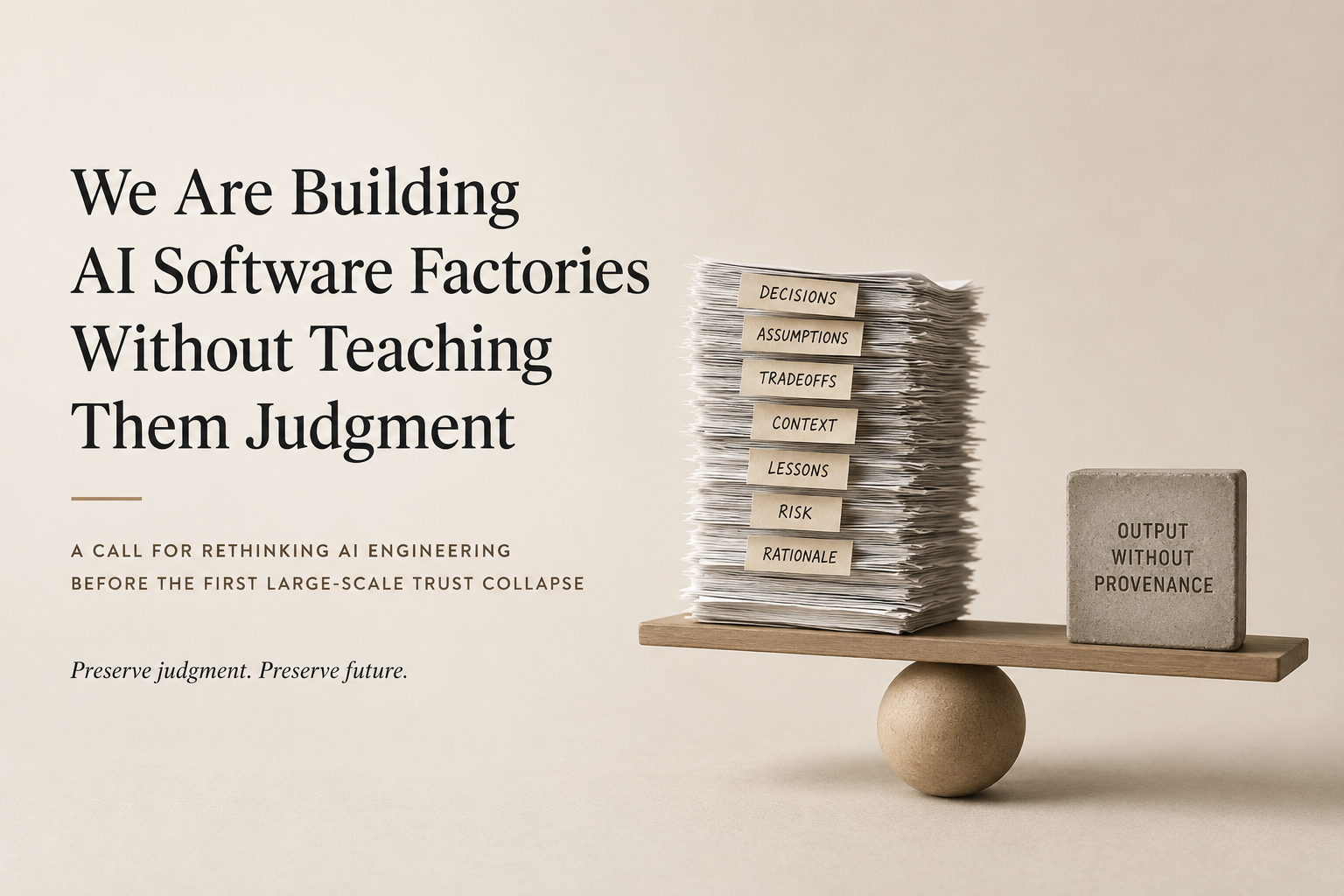

We Are Building AI Software Factories Without Teaching Them Judgment

Author: Yauheni Kurbayeu

Published: May 13, 2026

LinkedIn

A Call for Rethinking AI Engineering Before the First Large-Scale Trust Collapse

The industry is celebrating a strange milestone.

We finally taught machines how to produce software at industrial scale.

Multi-agent coding systems now:

- write features,

- refactor repositories,

- generate tests,

- review pull requests,

- migrate frameworks,

- and orchestrate delivery pipelines faster than many teams imagined possible just two years ago.

And almost nobody is asking the uncomfortable question hiding underneath all this acceleration.

Who is teaching these systems judgment?

Not syntax.

Not orchestration.

Not benchmark performance.

Judgment.

The Dangerous Analogy Nobody Wants to Discuss

Right now, the AI industry increasingly resembles a hospital that decided to massively accelerate surgery throughput by handing scalpels to brilliant medical students the day they graduate.

The students have:

- perfect exam scores,

- flawless anatomical knowledge,

- excellent simulation performance,

- and complete recall of procedural best practices.

But no experienced hospital in the world would allow them to independently perform heart surgery on day one.

Why?

Because medicine understands something software engineering still tries to ignore:

There is a massive difference between knowing how to execute a procedure and knowing when, why, and under which conditions that procedure should happen.

That difference is accumulated judgment.

And accumulated judgment is mostly compressed decision history.

Clinical Intuition Is Compressed Provenance

Experienced surgeons do not operate purely from textbooks.

They operate from:

- thousands of prior decisions,

- failures,

- tradeoffs,

- near misses,

- complications,

- procedural adaptations,

- and contextual signals accumulated over years.

What medicine calls clinical intuition is often highly compressed decision provenance.

A virtual gut feeling built from preserved decisions.

Yet in software engineering, especially in the AI era, we are industrializing execution while almost completely ignoring the preservation of judgment itself.

The Industry Is Obsessed With Visible Acceleration

The current AI conversation is dominated by:

- autonomous agents,

- software factories,

- orchestration frameworks,

- model capabilities,

- productivity gains,

- coding velocity,

- and cost reduction.

But very little attention is being paid to the invisible layer underneath the output:

- why decisions were made,

- which assumptions shaped them,

- what tradeoffs were accepted,

- which alternatives were rejected,

- whether the original reasoning is still valid,

- and how future systems inherit those decisions safely.

This omission is becoming dangerous.
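To make that invisible layer concrete, here is a minimal sketch of what a preserved decision could look like as data. Everything in it is an assumption made for illustration: the `DecisionRecord` name, its fields, the `needs_review` helper, and the pricing-cache example are hypothetical and do not describe any existing tool or standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical record of one engineering decision and the reasoning behind it."""
    decision: str                                 # what was done
    rationale: str                                # why it was done
    assumptions: Tuple[str, ...] = ()             # what had to be true for the rationale to hold
    tradeoffs_accepted: Tuple[str, ...] = ()      # costs knowingly taken on
    alternatives_rejected: Tuple[str, ...] = ()   # options considered and dropped
    review_by: Optional[date] = None              # when the reasoning should be re-checked

    def needs_review(self, today: date) -> bool:
        """True once the review date has passed; undated records never expire here."""
        return self.review_by is not None and today > self.review_by


# Illustrative example only; the scenario is invented.
record = DecisionRecord(
    decision="Cache pricing responses for 10 minutes",
    rationale="Upstream pricing API was rate-limited during launch",
    assumptions=("Prices change at most hourly",),
    tradeoffs_accepted=("Customers may briefly see stale prices",),
    alternatives_rejected=("Request a higher rate limit from the vendor",),
    review_by=date(2026, 9, 1),
)

print(record.needs_review(date(2026, 6, 1)))   # False
print(record.needs_review(date(2026, 10, 1)))  # True
```

The point of the sketch is not the schema. It is that each bullet above maps to a field an agent could read before it changes the code, instead of guessing the reasoning from the output.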

Organizational Memory Is Quietly Disappearing

The more capable AI systems become, the more organizational memory silently disappears behind generated outputs.

The problem is subtle at first.

An AI agent introduces a workaround because “the code works.”

Another agent later optimizes around that workaround without understanding its original constraint.

Months later, a third system treats the workaround as architectural truth.

Eventually nobody remembers:

- whether the behavior was intentional,

- whether the tradeoff was temporary,

- whether the policy changed,

- whether the original risk still exists,

- or whether the entire reasoning expired long ago.

The system still functions.

But the judgment lineage is gone.

And once that lineage disappears, organizations begin operating on inherited decisions they can no longer explain.
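The workaround chain described above can be sketched as a small lineage walk. This is an illustration under stated assumptions, not a proposed implementation: the `Decision` type, the `builds_on` link, the `temporary` flag, and both helper functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Decision:
    """Hypothetical decision node; `builds_on` points at the earlier decision it relies on."""
    id: str
    summary: str
    temporary: bool = False
    builds_on: Optional[str] = None


def lineage(decisions: Dict[str, Decision], start: str) -> List[Decision]:
    """Return the chain from `start` back to its origin, newest first.

    Stops if a cycle is detected; raises KeyError if a link points at an unknown id.
    """
    chain: List[Decision] = []
    seen = set()
    current: Optional[str] = start
    while current is not None and current not in seen:
        seen.add(current)
        node = decisions[current]
        chain.append(node)
        current = node.builds_on
    return chain


def rests_on_temporary(decisions: Dict[str, Decision], start: str) -> bool:
    """True if any ancestor of `start` was recorded as a temporary measure."""
    return any(d.temporary for d in lineage(decisions, start)[1:])


# The three steps from the text: workaround, optimization, "architectural truth".
history = {
    d.id: d
    for d in [
        Decision("d1", "Workaround added because the code works", temporary=True),
        Decision("d2", "Optimization built around the workaround", builds_on="d1"),
        Decision("d3", "Workaround treated as architecture", builds_on="d2"),
    ]
}

print([d.id for d in lineage(history, "d3")])  # ['d3', 'd2', 'd1']
print(rests_on_temporary(history, "d3"))       # True
```

If the first agent had recorded the workaround as temporary, the third system could have discovered that its "architectural truth" rests on a measure nobody intended to keep. Without the link, that question cannot even be asked.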

Most Engineering Judgment Is Inherited, Not Recomputed

This is the part many current AI discussions dangerously underestimate.

People assume sufficiently advanced AI systems will simply “reason everything again from scratch.”

But senior engineers know real systems do not work like that.

Organizations run on compressed historical context:

- past incidents,

- production scars,

- operational constraints,

- regulatory compromises,

- failed migrations,

- scaling lessons,

- political realities,

- and institutional memory.

Most engineering judgment is inherited, not recomputed.

What we casually call “gut feeling” is often years of unrecorded decision provenance compressed into intuition.

Medicine Already Solved This Problem Culturally

Medicine scales expertise through:

- supervised practice,

- peer review,

- case histories,

- institutional memory,

- procedural lineage,

- admissibility boundaries,

- and accountability structures.

It does not scale by pretending intuition is unnecessary.

Software engineering, meanwhile, increasingly behaves as if execution alone is enough.

As if:

- generating correct-looking outputs guarantees correct reasoning,

- local optimization automatically produces globally coherent systems,

- and organizational memory can safely evaporate because the next model iteration will “figure it out.”

We Are Scaling Junior Engineering

Software factories without preserved judgment are not senior engineering at scale.

They are junior engineering at scale.

Fast junior engineering.

Industrialized junior engineering.

And the danger of junior engineering is not that it always fails immediately.

The danger is that it confidently succeeds right up until the accumulated invisible mistakes become systemic.

Why Decision Provenance Matters Now

Decision provenance is not:

- corporate governance theater,

- another observability dashboard,

- or a compliance exercise.

It is the missing layer required for AI systems to inherit organizational judgment instead of merely inheriting outputs.

Because the real question of the AI era is no longer:

“Can machines generate software?”

That question is already being answered.

The real question is:

“How do we preserve and transfer engineering judgment once software generation becomes cheap?”

How do we ensure future agents inherit not only what was built, but why it was built that way?

How do we preserve tradeoffs before they dissolve into generated code?

How do we prevent organizations from becoming amnesiac systems operated by increasingly capable execution engines?

And perhaps most importantly:

How do we build virtual gut feeling without turning AI systems into opaque superstition machines?

The Industry Is Still Too Early to See the Risk

The challenge is that governance conversations rarely become mainstream during the expansion phase of a technological wave.

They become mainstream after the first large-scale trust collapse.

After the first preventable disaster.

After the first invisible reasoning failure with visible consequences.

The uncomfortable reality is that the AI industry is currently preparing operating rooms at industrial scale while barely discussing how surgical judgment itself will survive automation.

And by the time the first large failures force the conversation, the systems may already be deeply embedded in the foundations of how organizations think, build, decide, and operate.

Final Thought

The time to preserve judgment is not after the surgery begins killing people.

The time is now.