Why Humans Think They Remember Everything, And Why SDLC Memory Proves They Don’t

Author: Yauheni Kurbayeu

Published: Feb 22, 2026

LinkedIn

There is a quiet illusion inside every engineering organization. We believe we remember.

- We believe we remember why we chose this architecture.

- Why that constraint exists.

- Why that incident really happened.

- Why we split EU from US logic.

- Why we postponed that migration.

But cognitive science says something very uncomfortable.

According to cognitive psychology research on working memory capacity, our active working memory can typically retain approximately four meaningful chunks of information at any given time. Not forty. Not four hundred. Four. Everything else is reconstructed from fragments, habits, and narrative glue. And unless we rehearse or externalize information, it decays quickly. That’s not a leadership flaw. That’s biology.

Now contrast this with modern AI agents. Claude can operate with 200K tokens, sometimes even 1M. Codex supports 400K. That sounds enormous compared to a human brain juggling four active constraints.

But here’s the twist.

Even that is not enough for a living SDLC.

The Scale of Real Delivery Memory.

Let’s take a very ordinary setup: three teams, fifteen engineers, two-week sprints. Nothing extreme. No hyperscale complexity. Just a healthy SaaS product evolving in production.

If you compress work into decision-grade summaries, such as tickets distilled to intent, PRs summarized by impact, ADRs captured with alternatives, incidents structured with causes and mitigations - you still generate a surprising amount of structured reasoning material.

In practice, that means roughly 150 tickets per month across teams. Around 200–250 pull requests. A handful of ADRs and architectural conversations. A few production incidents. Sprint planning outcomes. Review decisions. Risk acceptances.

When you condense all of that into canonical, structured artifacts, not raw Slack noise, not logs, but reasoning-ready memory - you land at roughly 200,000 tokens per month.

Not theoretical tokens. Real delivery memory.

At enterprise scale, with 80 engineers and constant cross-team architectural churn, that number climbs to one to one and a half million tokens per month.

Now compare that to context windows.

Two hundred thousand tokens barely fit inside some modern models. Four hundred thousand buys you more breathing room. One million looks generous.

But here is the structural reality: a single quarter of delivery memory already exceeds what comfortably fits into one session window. And sessions reset.

A 400K window buys you time. It does not buy you continuity.

This is the same limitation humans face, only at a different magnitude.

The Real Problem Isn’t Storage.

Interestingly, storage is trivial.

At your current scale, a full year of curated SDLC memory, including embeddings and graph relationships, might consume less than 70 MB. Even at enterprise scale, you are still comfortably under half a gigabyte for structured memory.

The infrastructure cost is negligible.

What is expensive is cognitive continuity.

When memory lives only in people’s heads, every director change resets context. Every incident repeats prior mistakes. Every architectural debate replays arguments that were already resolved six months ago. Teams rediscover constraints as if they were new insights.

You don’t have a memory problem because you lack documentation.

You have a memory problem because you lack structured, queryable, causally linked memory.*

Why Bigger Context Windows Won’t Save Us

Increasing context windows feels like progress. And it is progress. But it is horizontal scaling of the wrong layer.

A large context window is still session-bound. It is still transient. It still resets.

Without a durable structure, the agent forgets after the session ends. Without a durable structure, humans reconstruct from fragments.

We keep trying to solve continuity with larger prompts.

What we actually need is layered memory.

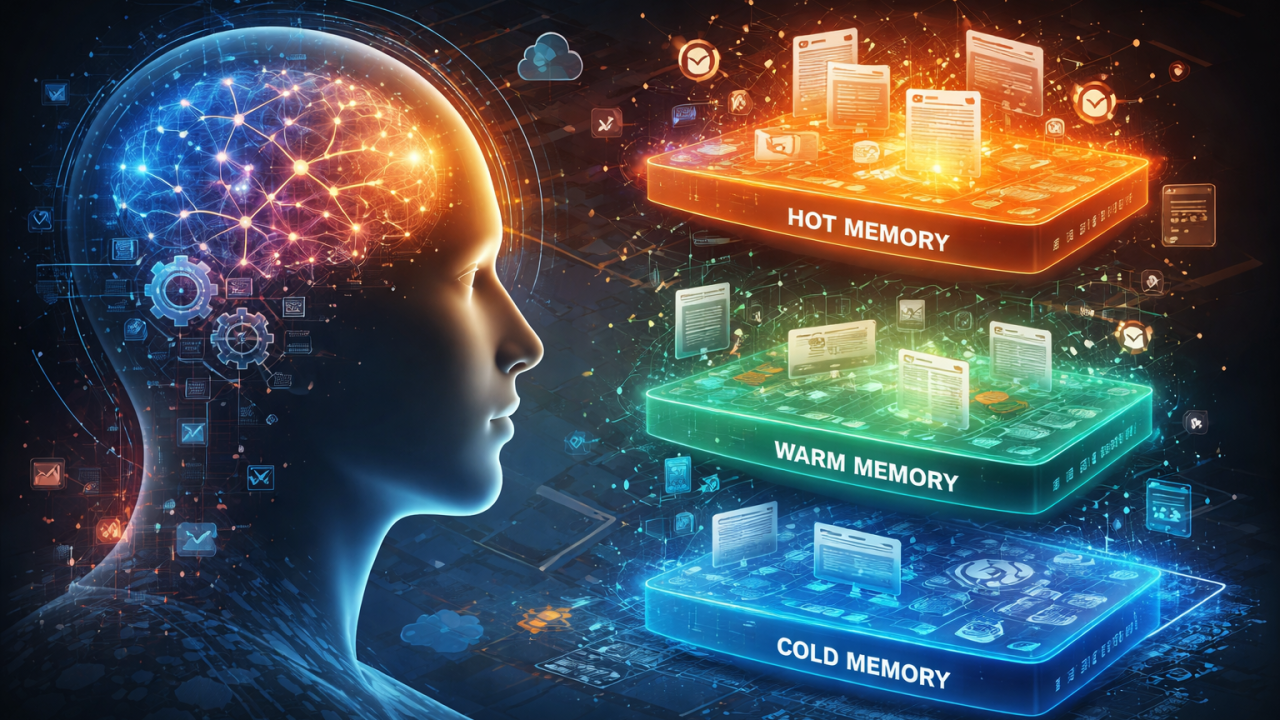

The Hot / Warm / Cold SDLC Brain

If we treat SDLC like a living system, memory must behave like a nervous system.

Hot memory is what’s happening now.

- The current sprint.

- Open incidents.

- Active risks.

- Unresolved questions.

- It is fluid, updated daily, and small enough to inject into an agent session.

- This is your operational working set.

Warm memory is the product’s evolving brain.

- Architectural decisions.

- Trade-offs. Postmortems.

- Compliance constraints.

- Ownership boundaries.

- Canonical artifacts such as Decisions, Questions, Risks, Actions, ADRs, and the relationships between them.

This layer spans months. It evolves, but it does not vanish. It is not injected whole; it is retrieved selectively.

Cold memory is evidence.

- Raw Jira threads.

- Slack transcripts.

- PR diffs.

- Recordings.

- Logs.

- Immutable proof of what happened and why.

- Rarely injected directly, but always linked.

The key insight is simple.

Hot moves. Warm stabilizes. Cold preserves.

Agents query across tiers. Humans reason across tiers. Neither should rely on recall alone.

The Missing Layer: Provenance.

The real power does not come from vector search alone.

It comes from relationships.

Many teams assume RAG solves continuity. It helps, but similarity is not an explanation. Finding a “similar ticket” is not the same as understanding why a constraint exists.

Every meaningful decision must know:

- Who made it.

- When.

- Based on what evidence.

- What alternatives were rejected.

- What it impacts.

- What risks it mitigates.

- What incidents it later contributed to.

- Whether it superseded a prior decision.

That is where graph structure becomes essential.

- A vector database helps you find “similar ideas.”

- A graph database helps you answer “why does this exist?”

Vector search retrieves relevance. Graph traversal reconstructs causality.

When you combine both, you stop searching for text and start traversing reasoning.

That is when SDLC becomes traceable.

What Happens When Teams Shift Toward AI Agents?

Now imagine a future that is not hypothetical, a near future that many leaders are already sensing. With AI agents becoming increasingly capable, surveys show that large proportions of developers and engineering teams already rely on AI in daily workflows, with some estimates indicating that more than 80% of engineers regularly use AI tools to assist with coding and development tasks.

There are even voices suggesting that in a few years, many traditional software engineering tasks will be largely automated by agents, leaving humans focused more on planning, design, and oversight rather than typing code themselves.

In this scenario, where 50–80% of day-to-day coding and execution work is handled by AI agents, the provenance problem becomes dramatically more acute. When human engineers write fewer lines of code and spend more time directing autonomous agents, the decision context and rationale are even more essential:

- The work product itself ceases to be the primary artifact.

- What matters becomes why and how an agent chose a particular implementation, not just what it produced.

Even studies that find AI tools boost individual or team productivity (with increases often ranging from roughly 20–60% or more) also highlight that developers end up spending more time overseeing work, validating outputs, and fixing agent-generated issues - the kind of higher-order reasoning that cannot itself be automated without memory of prior context.

AI agents can recognize patterns and write code, but they do not inherently carry organizational goals, product strategy, or the tradeoffs of past decisions unless those are explicitly encoded into a structured provenance layer.

This shift magnifies the necessity of the Warm Provenance tier:

- When agents create code autonomously, you must know what led to that decision every bit as much as what the code does.

- When agents replace repetitive tasks, humans are left with exception handling, strategy, and orchestration - the aspects that are hardest to recall without structured memory.

- When a product needs to prove compliance, explain risk mitigation, or reconstruct intent months later, the provenance graph becomes the only reliable record.

Without it, organizations risk building opaque systems that even their creators cannot fully justify.

Why This Matters More in the AI Era?

As AI reduces execution cost, delivery speed converges across organizations. Code generation improves everywhere. Test creation accelerates everywhere. Documentation drafts itself.

What does not converge is memory discipline.

- Teams without structured SDLC memory re-debate past decisions.

- They reintroduce mitigated risks.

- They repeat architectural mistakes.

- They lose context every time leadership rotates or a key engineer leaves.

Teams with layered provenance explain decisions under pressure. They onboard engineers and AI agents into reasoning instead of folklore. They allow AI agents to operate within real historical grounding. They maintain governance continuity even as people change.

The difference is not tooling.

It is structural memory.

Why This Matters for Leaders

If you are managing multiple teams, especially in a compliance-heavy SaaS environment, you are already simulating this system in your head.

You remember which dependency is fragile. You remember which agent workflow produced a problematic release. You remember why EU logic behaved differently in the last rollout.

But your brain is not a durable store. It is a reasoning engine with a tiny active window.

The cognitive load you feel is not a weakness.

It is structural overload.

You are holding roughly 200,000 tokens per month in a four-chunk processor.

In a future where AI agents perform half or more of the execution work, that memory burden does not vanish. It shifts from remembering code to remembering intent, causality, and justification.

Final Thought

Humans cannot remember everything. Agents cannot remember everything. Even million-token windows are not continuity.

But organizations can remember everything if they treat memory as infrastructure, not documentation.

Execution is becoming cheaper. Memory is becoming the differentiator.

In an AI-accelerated world, the teams with the strongest structured memory will not just move faster.

They will move consistently and responsibly. They will be the teams that can explain not only what was done, but why it was done, long after the code was written.