We are teaching AI to decide. But we are forgetting how to remember.

Author: Yauheni Kurbayeu

Published: Jan 3, 2026

LinkedIn

A while ago, I wrote that “SDLC has no memory”.

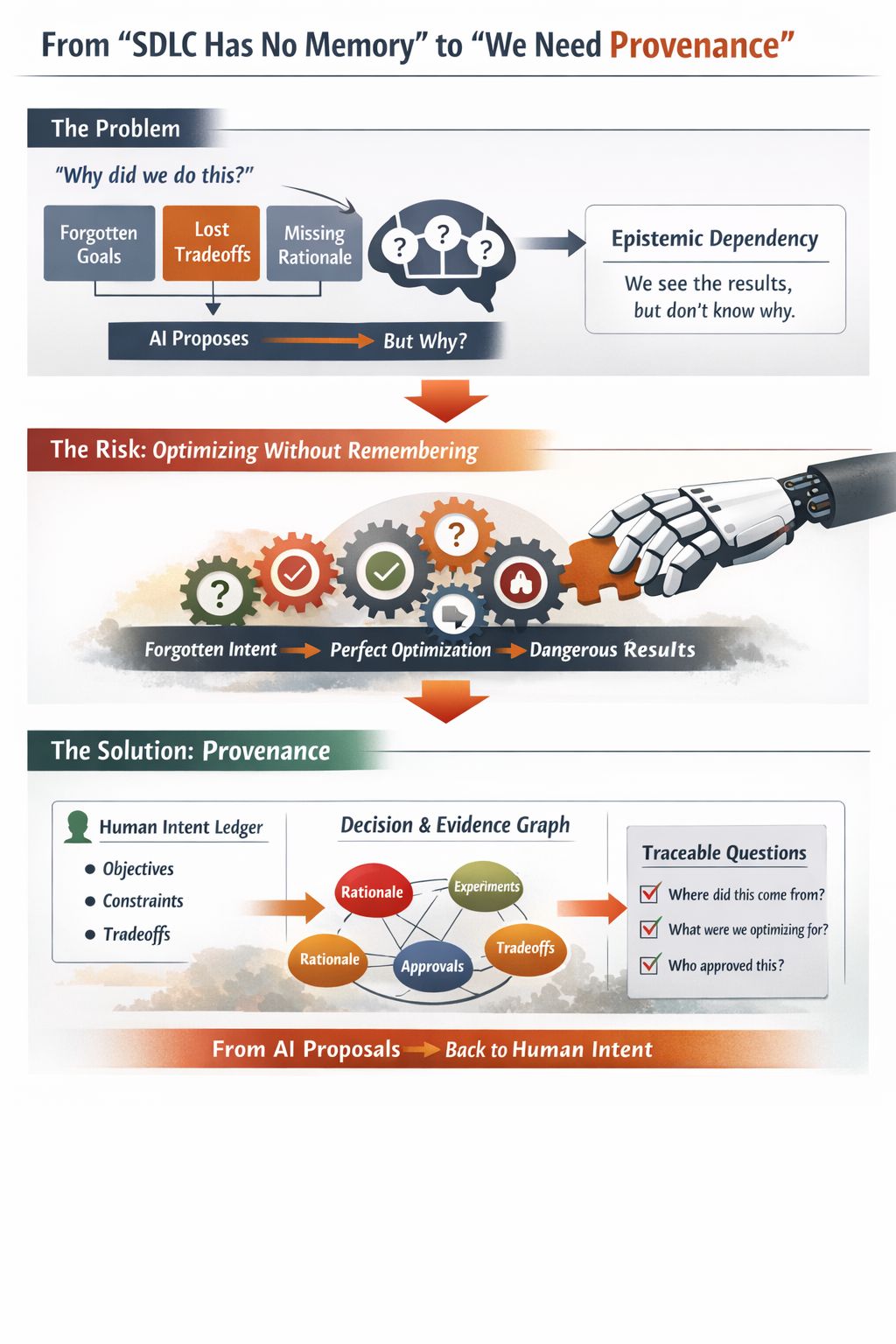

We don’t remember why decisions were made, which tradeoffs were accepted, or which constraints were stated explicitly. Six months later, someone asks: “Why are we doing it this way?” And the honest answer is often: “We don’t really know anymore. But it works.” Until recently, this was “just” a delivery problem.

Now it’s becoming an AI safety problem.

We are starting to let AI:

- propose architectures

- optimize plans

- explore solution spaces humans can’t fully reason about

And we are slowly shifting:

- from: “We understand why this is the solution.”

- to: “The system found this. The metrics look good.”

That creates a comprehension gap: we see the outputs, but we no longer understand the reasoning.

The real risk is not evil AI. The real risk is: “We will build systems that perfectly optimize things we no longer remember choosing.” No consciousness required. Just optimization, feedback loops, and forgotten intent.

What’s missing is a new infrastructure layer: Provenance.

Provenance = the ability to answer: “Where did this decision come from, and what human intent did it serve?”

Not a wiki. Not Jira. Not Confluence.

But:

- A ledger of human intent

- A graph of decisions, evidence, and tradeoffs

- A trace from execution back to purpose
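As a rough illustration of those three pieces, here is a minimal Python sketch that models provenance as a small directed graph. Everything in it is an assumption made for illustration: the names `Node`, `ProvenanceGraph`, and `trace_to_intent`, the set of node kinds, and the sample entries are all hypothetical, and this is one possible shape rather than a reference design.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    """One ledger entry. The `kind` vocabulary is an assumption of this sketch."""
    id: str
    kind: str  # "intent", "decision", "evidence", "tradeoff", or "execution"
    summary: str


@dataclass
class ProvenanceGraph:
    nodes: dict[str, Node] = field(default_factory=dict)
    # Maps a node id to the ids of the nodes that justify it.
    justified_by: dict[str, list[str]] = field(default_factory=dict)

    def add(self, node: Node, justified_by: tuple[str, ...] = ()) -> None:
        # Refuse links to entries that were never recorded.
        for parent in justified_by:
            if parent not in self.nodes:
                raise KeyError(f"unknown justification: {parent}")
        self.nodes[node.id] = node
        self.justified_by[node.id] = list(justified_by)

    def trace_to_intent(self, node_id: str) -> list[Node]:
        """Follow justification links and return every human intent reached."""
        seen: set[str] = set()
        stack = [node_id]
        intents: list[Node] = []
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            node = self.nodes[current]
            if node.kind == "intent":
                intents.append(node)
            stack.extend(self.justified_by[current])
        return intents


# Made-up sample entries, for illustration only.
graph = ProvenanceGraph()
graph.add(Node("intent-1", "intent", "Keep checkout fast for users"))
graph.add(Node("evidence-1", "evidence", "Load test report"))
graph.add(Node("decision-1", "decision", "Cache pricing responses"),
          justified_by=("intent-1", "evidence-1"))
graph.add(Node("exec-1", "execution", "Deployed cache configuration"),
          justified_by=("decision-1",))
graph.add(Node("exec-2", "execution", "Optimization job with no recorded reason"))

traced = [n.id for n in graph.trace_to_intent("exec-1")]
orphaned = graph.trace_to_intent("exec-2")
```

In this sketch, `exec-1` traces back to `intent-1`, while `exec-2` traces back to nothing. That empty result is the interesting signal: something is running that no recorded human intent explains.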

In a world where machines propose more and more decisions, the AI should not be the judge. Provenance should be the court record.

Before we talk about “AI alignment”, we must be able to answer, with evidence:

- What are we actually trying to optimize for?

- What are the non-goals?

- Which constraints are sacred?

- Which tradeoffs were explicitly accepted?

Otherwise, we’ll get perfectly optimized nonsense.
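The four questions above could be captured as an explicit record that a machine-generated proposal is reviewed against. The following is a minimal sketch under stated assumptions: `IntentRecord`, `Proposal`, and `review` are hypothetical names, the sample values are invented, and the check covers only constraints and tradeoffs (non-goals are recorded but not evaluated here).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class IntentRecord:
    """Hypothetical record of what humans actually chose."""
    objective: str
    non_goals: frozenset[str]
    sacred_constraints: frozenset[str]
    accepted_tradeoffs: frozenset[str]


@dataclass(frozen=True)
class Proposal:
    """Hypothetical description of a machine-generated proposal."""
    summary: str
    violates: frozenset[str]   # constraints the proposal would break
    tradeoffs: frozenset[str]  # tradeoffs the proposal relies on


def review(intent: IntentRecord, proposal: Proposal) -> list[str]:
    """Return objections; an empty list means this check found none."""
    objections: list[str] = []
    for constraint in sorted(proposal.violates & intent.sacred_constraints):
        objections.append(f"breaks sacred constraint: {constraint}")
    for tradeoff in sorted(proposal.tradeoffs - intent.accepted_tradeoffs):
        objections.append(f"tradeoff never explicitly accepted: {tradeoff}")
    return objections


# Invented sample values, for illustration only.
intent = IntentRecord(
    objective="reduce cloud spend",
    non_goals=frozenset({"rewrite the billing service"}),
    sacred_constraints=frozenset({"no customer data leaves the EU"}),
    accepted_tradeoffs=frozenset({"slower nightly reports"}),
)
proposal = Proposal(
    summary="Move batch jobs to a cheaper region",
    violates=frozenset({"no customer data leaves the EU"}),
    tradeoffs=frozenset({"slower nightly reports", "weaker audit logging"}),
)
objections = review(intent, proposal)
```

Here the proposal may well improve the cost metric, yet `review` returns two objections: it breaks a sacred constraint, and it leans on a tradeoff nobody accepted. Without the record, neither objection could be raised.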

We started with a delivery problem. We are ending with a governance and civilization-scale safety problem:

How do we make sure we still remember what we are trying to build when machines start building it with us?